Ruby

Contents

- 1 High level components of Ruby

- 2 Implementation of Ruby

- 3 Life of a memory request in Ruby

High level components of Ruby

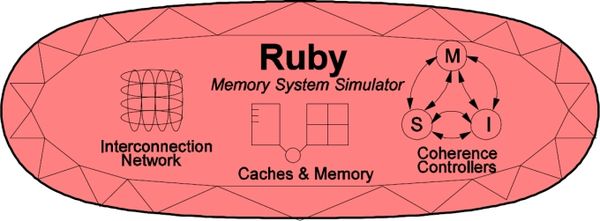

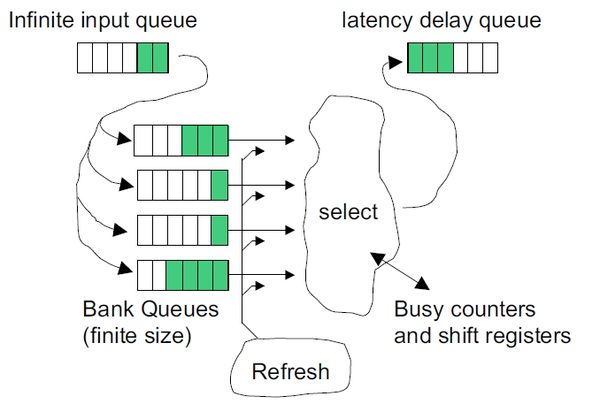

Ruby implements a detailed simulation model for the memory subsystem. It models inclusive/exclusive cache hierarchies with various replacement policies, coherence protocol implementations, interconnection networks, DMA and memory controllers, and various sequencers that initiate memory requests and handle responses. The models are modular, flexible and highly configurable. Three key aspects of these models are:

- Separation of concerns -- for example, the coherence protocol specifications are separate from the replacement policies and cache index mapping, and the network topology is specified separately from the implementation.

- Rich configurability -- almost any aspect affecting the memory hierarchy functionality and timing can be controlled.

- Rapid prototyping -- a high-level specification language, SLICC, is used to specify functionality of various controllers.

The following picture, taken from the GEMS tutorial in ISCA 2005, shows a high-level view of the main components in Ruby.

SLICC + Coherence protocols:


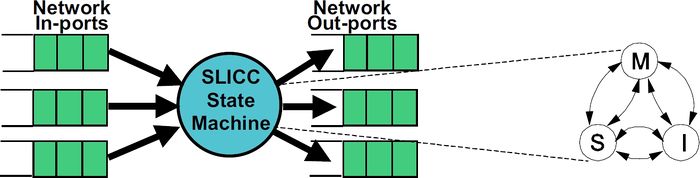

SLICC stands for Specification Language for Implementing Cache Coherence. It is a domain-specific language for specifying cache coherence protocols. In essence, a cache coherence protocol behaves like a state machine, and SLICC is used to specify the behavior of that state machine. Since the aim is to model the hardware as closely as possible, SLICC imposes constraints on the state machines that can be specified. For example, SLICC can restrict the number of transitions that can take place in a single cycle. Apart from protocol specification, SLICC also ties together some of the components in the memory model. As can be seen in the following picture, the state machine takes its input from the input ports of the interconnection network and queues its output at the output ports of the network, thus tying together the cache/memory controllers with the interconnection network itself.
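To make the state-machine view concrete, here is a minimal table-driven sketch in Python. It is not SLICC syntax and not the MI_example protocol itself: the state names, event names and action names are hypothetical, chosen only to illustrate how a controller maps (state, event) pairs to a next state and a list of actions.

```python
# Illustrative only: a toy invalid/modified controller, not real SLICC.
# All state, event and action names below are hypothetical.
TRANSITIONS = {
    # (current state, event): (next state, actions)
    ("I", "Load"): ("IM", ["issue_GETX"]),
    ("I", "Store"): ("IM", ["issue_GETX"]),
    ("IM", "Data"): ("M", ["write_data_to_cache", "notify_sequencer"]),
    ("M", "Load"): ("M", ["notify_sequencer"]),
    ("M", "Store"): ("M", ["notify_sequencer"]),
    ("M", "Replacement"): ("MI", ["issue_PUTX"]),
    ("MI", "Writeback_Ack"): ("I", ["deallocate_block"]),
}


def step(state, event):
    """Return (next_state, actions); reject transitions the table lacks."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(f"no transition from {state} on {event}") from None


def run(events, state="I"):
    """Feed a sequence of events to the controller and log the actions."""
    log = []
    for event in events:
        state, actions = step(state, event)
        log.extend(actions)
    return state, log
```

For example, `run(["Load", "Data", "Replacement", "Writeback_Ack"])` walks a block from invalid to modified and back to invalid, while an event that has no entry for the current state raises an error, much as an unspecified transition indicates a protocol bug.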

Protocol independent memory components

- Cache Memory

- Replacement Policies

- Memory Controller


Interconnection Network

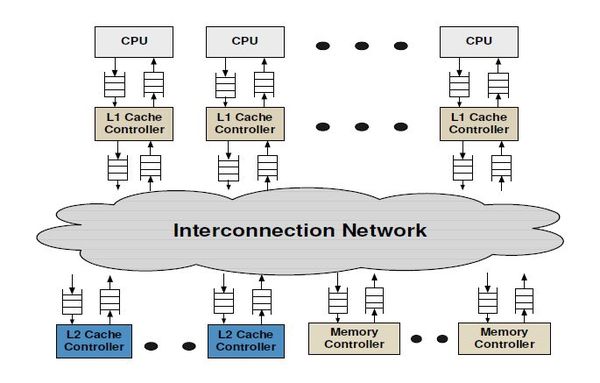

The interconnection network connects the various components of the memory hierarchy (cache, memory, and DMA controllers) together.

The key components of an interconnection network are:

- Topology

- Routing

- Flow Control

- Router Microarchitecture

More details about the network model implementation are described here

Implementation of Ruby

Directory Structure

- src/mem/

- protocols: SLICC specification for coherence protocols

- slicc: implementation for SLICC parser and code generator

- ruby

- buffers: implementation for message buffers that are used for exchanging information between the cache, directory, memory controllers and the interconnect

- common: frequently used data structures, e.g. Address (with bit-manipulation methods), histogram, data block, basic types (int32, uint64, etc.)

- eventqueue: Ruby’s event queue API for scheduling events on the gem5 event queue

- filters: various Bloom filters (stale code from GEMS)

- network: Interconnect implementation, sample topology specification, network power calculations

- profiler: Profiling for cache events, memory controller events

- recorder: Cache warmup and access trace recording

- slicc_interface: Message data structure, various mappings (e.g. address to directory node), utility functions (e.g. conversion between address & int, convert address to cache line address)

- system: Protocol independent memory components – CacheMemory, DirectoryMemory, Sequencer, RubyPort

Protocols

Protocol Independent Memory components

System

This is a high-level container for a few important components of Ruby that may need to be accessed from various parts of Ruby. Only ONE instance of this class is created, and it is globally available through a pointer named g_system_ptr. It holds a pointer to Ruby's profiler object, which allows any component of Ruby to get hold of the profiler and collect statistics in a central location by accessing it through g_system_ptr. It also holds important information about the memory hierarchy, such as the cache block size and the physical memory size, and makes it available to all parts of Ruby as and when required. It also holds the pointer to the on-chip network in Ruby. Another important object it points to is Ruby's wrapper for the simulator event queue (called RubyEventQueue). It also contains a pointer to the simulated physical memory (variable m_mem_vec_ptr). In sum, the System class acts as a container of pointers to some important objects of Ruby's memory system and makes them available globally to all of Ruby by exposing itself through g_system_ptr.

Parameters

- random_seed is the seed used to randomize delays in Ruby. This allows simulating multiple runs with slightly perturbed delays/timings.

- randomization, when turned on, asks Ruby to randomly delay messages. This flag is useful when stress testing a system to expose corner cases. It should NOT be turned on when collecting simulation statistics.

- clock is the parameter for setting the clock frequency (on-chip).

- block_size_bytes specifies the size of cache blocks in bytes.

- mem_size specifies the physical memory size of the simulated system.

- network gives the pointer to the on-chip network for Ruby.

- profiler gives the pointer to the profiler of Ruby.

- tracer is the pointer to Ruby's memory request tracer. The tracer is primarily used to play back a memory request trace in order to warm up Ruby's caches before the actual simulation starts.

Related files

- src/mem/ruby/system

- System.hh/cc: contains code for the System

- RubySystem.py: the corresponding Python file with parameters.

Sequencer

Sequencer is one of the most important classes in Ruby. Every memory request must pass through it at least twice: once before being serviced by the cache coherence mechanism and once just after being serviced. There is one instance of the Sequencer class for each hardware thread being simulated. For example, if we are simulating a 16-core system in which each core has a single hardware thread context, then there are 16 Sequencer objects in the system, each responsible for managing the memory requests (loads, stores, atomic operations, etc.) from one hardware thread context. The i-th Sequencer object handles requests only from the i-th hardware context (in the example above, the i-th core). Each Sequencer assumes that it has access to the L1 instruction and data caches that are attached to its core.

Following are the primary responsibilities of Sequencer:

- Injecting all memory requests into the underlying cache hierarchy (and coherence mechanism) and accounting for them.

- Resource allocation and accounting (e.g. making sure a particular core/hardware thread does not have more than the specified number of outstanding memory requests).

- Making sure atomic operations are handled properly.

- Making sure that the underlying cache hierarchy and coherence protocol are making forward progress.

- Returning the result to the corresponding port of the front end (i.e. M5) once a request has been serviced by the underlying cache hierarchy.

Parameters

- icache is the parameter where the L1 Instruction cache pointer is passed.

- dcache is the parameter where the L1 Data cache pointer is passed.

- max_outstanding_requests specifies the maximum allowed number of outstanding memory requests from a given core or hardware thread.

- deadlock_threshold specifies the number of cycles (Ruby cycles) after which, if a given memory request has not been satisfied by the cache hierarchy, a possible deadlock (or lack of forward progress) is declared.

Related files

- src/mem/ruby/system

- Sequencer.hh/cc: contains code for the Sequencer

- Sequencer.py: the corresponding Python file with parameters.

More detailed operation description

In this section, we describe the operation of the Sequencer in more detail.

- Injection of memory requests into the cache hierarchy, request accounting and resource allocation:

- The entry point for a memory request into the Sequencer is the method makeRequest. This method is called from the corresponding RubyPort (Ruby's wrapper for an M5 port). Once it gets the request, the Sequencer checks whether any resource limitation needs to be enforced by calling the method getRequestStatus. This method makes sure that a given Sequencer (i.e. a core or hardware thread context) does NOT issue multiple simultaneous requests to the same cache block. If the current request is for a cache block for which another request from the same Sequencer is still pending, the current request is not issued to the cache hierarchy; instead, it waits for the previous request to the same cache block to be satisfied first. In the code, this is done by checking the two request accounting tables, m_writeRequestTable and m_readRequestTable, and setting the status to RequestStatus_Aliased. The method also makes sure that the number of outstanding memory requests from a given Sequencer does not exceed the configured limit.

- If the current request does not violate any of the above constraints, it is registered for accounting purposes by calling the method insertRequest. Depending on the type of the request, this method creates an entry for it in either m_writeRequestTable or m_readRequestTable. These two tables record the write requests and read requests, respectively, that the given Sequencer has issued to the cache hierarchy but that are yet to be satisfied. The memory request is then pushed to the cache hierarchy by calling the method issueRequest. This method is responsible for creating the request structure that is understood by the underlying SLICC-generated coherence protocol implementation and cache hierarchy. It does so by setting the request type and mode accordingly and creating an object of class RubyRequest for the current request. Finally, the L1 instruction or L1 data cache access latency is accounted for and the request is pushed to the cache hierarchy and the coherence mechanism for processing. This is done by enqueueing the request on the mandatory queue (m_mandatory_q_ptr). Every request is passed to the corresponding L1 cache controller through this mandatory queue. The cache hierarchy is then responsible for satisfying the request.

- Deadlock/lack of forward progress detection:

- As mentioned earlier, another responsibility of the Sequencer is to make sure that the cache hierarchy is making progress in servicing the memory requests that have been issued. To do this, the Sequencer periodically wakes up and scans the m_writeRequestTable and m_readRequestTable tables, which hold the currently outstanding requests from the given Sequencer, to find requests that have been issued but not yet satisfied by the cache hierarchy. If it finds any unsatisfied request that was issued more than m_deadlock_threshold (parameter) cycles ago, it reports a possible deadlock in the cache hierarchy. Note that, although it reports a possible deadlock, what it actually detects is a lack of forward progress. Thus there may be false positives in this deadlock detection mechanism.

- Sending the result back to the front end and making sure atomic operations are handled properly:

- Once the cache hierarchy satisfies a request, it calls the Sequencer's readCallback or writeCallback method, depending on the type of the request. Note that the time taken to service the request is automatically accounted for through event scheduling, as readCallback or writeCallback is called only after the number of cycles required to satisfy the request has elapsed. In these two methods, the corresponding record of the request is removed from m_readRequestTable or m_writeRequestTable. Also, if the request is found to be part of an atomic operation (e.g. RMW or LL/SC), appropriate actions are taken to make sure that the semantics of atomic operations are respected.

- After that, a method called hitCallback is called. In this method, some statistics are collected by calling functions on Ruby's profiler. For write requests, the data is actually updated in this function (and not while simulating the request through the cache hierarchy and coherence protocol). Finally, ruby_hit_callback is called, which ultimately sends the packet back to the front end, signifying completion of the processing of the memory request on Ruby's side.
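The request accounting and forward-progress check described above can be sketched as follows. This is a simplified Python model, not the Sequencer's C++ code: the class `ToySequencer`, the status names other than Aliased, the 64-byte line size and the use of plain dictionaries for the two request tables are all assumptions made for illustration.

```python
from enum import Enum

BLOCK_SIZE = 64  # bytes; assumed cache line size


class RequestStatus(Enum):
    # Only "Aliased" is named in the text; the other names are hypothetical.
    READY = "Ready"
    ISSUED = "Issued"
    ALIASED = "Aliased"
    BUFFER_FULL = "BufferFull"


def line_address(addr):
    """Clear the offset bits so that all bytes of a line compare equal."""
    return addr & ~(BLOCK_SIZE - 1)


class ToySequencer:
    def __init__(self, max_outstanding_requests, deadlock_threshold):
        self.max_outstanding = max_outstanding_requests
        self.deadlock_threshold = deadlock_threshold
        self.read_table = {}    # line address -> cycle of issue
        self.write_table = {}   # line address -> cycle of issue
        self.mandatory_queue = []

    def get_request_status(self, addr):
        line = line_address(addr)
        if line in self.read_table or line in self.write_table:
            return RequestStatus.ALIASED
        if len(self.read_table) + len(self.write_table) >= self.max_outstanding:
            return RequestStatus.BUFFER_FULL
        return RequestStatus.READY

    def make_request(self, addr, is_write, now):
        status = self.get_request_status(addr)
        if status is not RequestStatus.READY:
            return status
        table = self.write_table if is_write else self.read_table
        table[line_address(addr)] = now                 # insertRequest step
        self.mandatory_queue.append((addr, is_write))   # issueRequest step
        return RequestStatus.ISSUED

    def callback(self, addr, is_write):
        """Remove the record once the cache hierarchy satisfies the request."""
        table = self.write_table if is_write else self.read_table
        return table.pop(line_address(addr))

    def wakeup(self, now):
        """Return the lines outstanding for more than the threshold."""
        stuck = []
        for table in (self.read_table, self.write_table):
            for line, issued in table.items():
                if now - issued > self.deadlock_threshold:
                    stuck.append(line)
        return sorted(stuck)
```

In this sketch a second request to any byte of a line that is already outstanding is reported as aliased rather than issued, and `wakeup` only reports old requests; whether such a request is a true deadlock or merely slow cannot be decided from the tables alone, which is why false positives are possible.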

CacheMemory and Cache Replacement Policies

This module can model any set-associative cache structure with a given associativity and size. Each instance of this module models a single bank of a cache. Thus each type of cache in the system (e.g. L1 instruction, L1 data, L2, etc.) and every bank of a cache needs a separate instance of this module. This module can also model a fully associative cache when the associativity is set equal to the number of cache lines in the bank, so that there is a single set. In the Ruby memory system, this module is primarily expected to be accessed by the SLICC-generated code of the coherence protocol being modeled.

Basic Operation

This module models the set-associative structure as a two-dimensional (2D) array. Each row of the 2D array represents a set in the set-associative cache structure, while the columns represent ways. The number of columns is equal to the given associativity (parameter), while the number of rows is determined by the desired size of the structure (parameter), the associativity (parameter) and the size of the cache line (parameter). This module exposes six important functions that coherence protocols use to manage the caches.

- It can be queried, through a function named isTagPresent, for whether a given cache line address is present in the set-associative structure being modeled. This function returns true iff the given cache line address is present.

- It supports a lookup operation, through a function named lookup, which returns the cache entry for a given cache line address (if present). It returns NULL if the block with the given address is not present in the set-associative cache structure.

- It allows a new cache entry to be allocated in the set-associative structure through a function named allocate.

- It allows the cache entry for a given cache line address to be deallocated through a function named deallocate.

- It can be queried for whether allocating an entry with a given cache line address would require replacing another entry in the designated set (derived from the cache line address). This functionality is provided by the cacheAvail function, which, for a given cache line address, returns true if NO replacement of another entry in the same set is required to make space for a new entry with the given address.

- The function cacheProbe is used to find the cache line address of a victim line in case placing a new entry would require victimizing another cache block in the same set. Given the address of the new cache line that would have to be allocated, this function returns the cache line address of the victim line.
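The six operations listed above can be illustrated with a small Python model of one cache bank. This is a sketch under assumptions, not the CacheMemory implementation: the class name `ToyCacheMemory`, the snake_case method names, the per-slot timestamp used to pick a victim and the modulo-based set index are all choices made here for brevity.

```python
class ToyCacheMemory:
    """One cache bank as a 2D array: rows are sets, columns are ways."""

    def __init__(self, size, assoc, block_size=64, start_index_bit=6):
        self.assoc = assoc
        self.block_size = block_size
        self.start_index_bit = start_index_bit
        self.num_sets = size // (assoc * block_size)
        # Each slot is None (empty) or [line_address, entry, last_use].
        self.ways = [[None] * assoc for _ in range(self.num_sets)]
        self.tick = 0

    def _line(self, addr):
        return addr & ~(self.block_size - 1)

    def _row(self, addr):
        return self.ways[(addr >> self.start_index_bit) % self.num_sets]

    def _find(self, addr):
        line = self._line(addr)
        for slot in self._row(addr):
            if slot is not None and slot[0] == line:
                return slot
        return None

    def is_tag_present(self, addr):
        return self._find(addr) is not None

    def lookup(self, addr):
        slot = self._find(addr)
        return None if slot is None else slot[1]

    def cache_avail(self, addr):
        """True if the line is already present or its set has a free way."""
        if self.is_tag_present(addr):
            return True
        return any(slot is None for slot in self._row(addr))

    def allocate(self, addr, entry):
        assert not self.is_tag_present(addr) and self.cache_avail(addr)
        row = self._row(addr)
        self.tick += 1
        row[row.index(None)] = [self._line(addr), entry, self.tick]

    def deallocate(self, addr):
        row, line = self._row(addr), self._line(addr)
        for way, slot in enumerate(row):
            if slot is not None and slot[0] == line:
                row[way] = None

    def touch(self, addr):
        """Record an access so the victim choice reflects recency."""
        self.tick += 1
        self._find(addr)[2] = self.tick

    def cache_probe(self, addr):
        """Line address of the least recently used entry in the full set."""
        assert not self.cache_avail(addr)
        return min(self._row(addr), key=lambda slot: slot[2])[0]
```

A protocol would typically call `cache_avail` before `allocate`, and fall back to `cache_probe` plus `deallocate` of the victim when the set is full.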

Parameters

There are four important parameters for this class.

- size is the parameter that provides the size of the set-associative structure being modeled in units of bytes.

- assoc specifies the set-associativity of the structure.

- replacement_policy is the name of the replacement policy that would be used to select victim cache line when there is conflict in a given set. Currently, only two possible choices are available (PSEUDO_LRU and LRU).

- Finally, the start_index_bit parameter specifies the bit position in the address from which indexing into the cache should start. This is a tricky parameter; if it is not set properly, only a portion of the cache capacity ends up being used. How this value should be specified is therefore explained through a couple of examples. Assume a cache line size of 64 bytes and a single-core machine with an L1 cache with only one bank and an L2 cache with 4 banks. The CacheMemory module that models the L1 cache should have start_index_bit set to log2(64) = 6 (this is the default value, assuming 64-byte cache lines). This is required because the addresses passed around in Ruby are full addresses (i.e. they have as many bits as are required to access any address in the physical address range), and since the caches are accessed at the granularity of a cache line (here 64 bytes), the low-order 6 bits of the address are effectively 0. So the last 6 bits of the given address should be discarded when calculating which set (index) of the set-associative structure the address maps to. Now consider the more complicated case of the L2 cache, which has 4 banks. As mentioned previously, this module models a single bank of a set-associative cache, so there are four instances of the CacheMemory class to model the whole L2 cache. Assuming that the cache bank a request goes to is statically decided by the low-order log2(4) = 2 bits of the cache line address, the address bits at positions 6 and 7 are the same for all accesses coming to a given bank (i.e. an instance of CacheMemory here). Thus indexing within the set-associative structure (CacheMemory instance) modeling a given bank should use address bits 8 and higher to find which set a cache block should go to, and the start_index_bit parameter should be set to 8 for the banks of the L2 in this example. If it is erroneously set to 6, only a fourth of the desired L2 capacity is utilized!
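The effect of start_index_bit in the 4-bank L2 example can be checked with a short calculation. The sketch below assumes 64-byte lines, a bank chosen by the low-order two bits of the cache line address, and a set index computed as the shifted address modulo the number of sets; the function name and the 256-set bank are made up for the illustration.

```python
def sets_touched(num_sets, start_index_bit, bank,
                 num_banks=4, block_bits=6, lines=4096):
    """Set indices used inside one L2 bank by the lines that map to it."""
    used = set()
    for line_number in range(lines):
        # The bank is selected by the low-order bits of the line address.
        if line_number % num_banks != bank:
            continue
        addr = line_number << block_bits
        used.add((addr >> start_index_bit) % num_sets)
    return used


# A hypothetical bank with 256 sets, looking at the lines sent to bank 1.
correct = sets_touched(num_sets=256, start_index_bit=8, bank=1)
wrong = sets_touched(num_sets=256, start_index_bit=6, bank=1)
print(len(correct), len(wrong))  # 256 64
```

With start_index_bit = 8 every set of the bank is reachable, while with start_index_bit = 6 the two bank-select bits are reused as index bits, so only one set in four is ever selected.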

More detailed description of operation

As mentioned previously, the set-associative structure is modeled as a 2D array in the CacheMemory class. The variable m_cache is this all-important 2D array containing the set-associative structure. Each element of this 2D array is of a type derived from the AbstractCacheEntry class. Beyond the minimal required functionality and contents of each cache entry, the entry type can be extended inside the coherence protocol files. This allows CacheMemory to be generic enough to hold any type of cache entry desired by a given coherence protocol, as long as it derives from the AbstractCacheEntry interface. The m_cache 2D array has a number of rows equal to the number of sets in the set-associative structure being modeled, while the number of columns is equal to the associativity.

As in any set-associative structure, the set (row) in which a cache entry resides is decided by the part of the cache block address used for indexing. The function addressToCacheSet calculates this index for a given address. The way in which a cache entry resides within its designated set (row) is recorded in a hash_map object named m_tag_index. So, to access a cache entry in the set-associative structure, first the set number where the cache block should reside is calculated, and then m_tag_index is looked up to find the way in which the required cache block resides. If a cache entry is invalid or empty, it is set to NULL or its permission is set to NotPresent.

One important aspect of Ruby's caches is the separation of the set-associative structure of the cache from its replacement policy. This allows a modular design in which the structure of the cache is independent of its replacement policy. When a victim needs to be selected to make space for a new cache block (by calling the cacheProbe function), the getVictim function of the class implementing the replacement policy is called for the given set. getVictim returns the way number of the victim. The replacement policy is informed about accesses by calling its touch function, which allows it to update the access recency. Currently two replacement policies are supported -- LRU and PseudoLRU. The LRU policy has a straightforward implementation: it keeps track of the absolute time at which each way within each set was last accessed, and it always victimizes the entry that was last accessed furthest back in time. PseudoLRU implements a binary-tree-based Non-Recently-Used policy. It arranges the ways in each set in an implicit binary-tree-like structure. Every node of the binary tree encodes which of its two subtrees was accessed more recently. During victim selection, it starts from the root of the tree and traverses down, at each node choosing the subtree that was touched less recently. Traversal continues until it reaches a leaf node, and the id of that leaf is returned.
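The tree-based pseudo-LRU scheme described above can be sketched for a single set as follows. This is an illustrative Python model, not the code in PseudoLRUPolicy.hh: the class name, the heap-style node numbering, the bit encoding (0 = left subtree more recent) and the power-of-two associativity requirement are assumptions of the sketch.

```python
class ToyPseudoLRUSet:
    """Tree pseudo-LRU state for one set with power-of-two associativity."""

    def __init__(self, assoc):
        assert assoc >= 2 and assoc & (assoc - 1) == 0
        self.assoc = assoc
        # Heap layout: node i has children 2i+1 and 2i+2. bits[i] names the
        # more recently used side of node i (0 = left, 1 = right).
        self.bits = [0] * (assoc - 1)

    def touch(self, way):
        """Mark every node on the path to `way` as recently used that side."""
        node, lo, hi = 0, 0, self.assoc
        while hi - lo > 1:
            mid = (lo + hi) // 2
            side = 0 if way < mid else 1
            self.bits[node] = side
            node = 2 * node + 1 + side
            if side == 0:
                hi = mid
            else:
                lo = mid

    def get_victim(self):
        """Walk from the root, always taking the less recently used side."""
        node, lo, hi = 0, 0, self.assoc
        while hi - lo > 1:
            mid = (lo + hi) // 2
            side = 1 - self.bits[node]
            node = 2 * node + 1 + side
            if side == 0:
                hi = mid
            else:
                lo = mid
        return lo


plru = ToyPseudoLRUSet(assoc=4)
for way in range(4):
    plru.touch(way)
print(plru.get_victim())  # 0, the same choice true LRU would make
plru.touch(0)
print(plru.get_victim())  # 2, whereas true LRU would pick way 1
```

The second victim shows why the policy is only an approximation of LRU: it stores assoc - 1 bits per set instead of a full access order, so it guarantees only that the victim was not touched recently.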

Related files

- src/mem/ruby/system

- CacheMemory.cc: contains CacheMemory class which models a cache bank

- CacheMemory.hh: Interface for the CacheMemory class

- Cache.py: Python configuration file for CacheMemory class

- AbstractReplacementPolicy.hh: Generic interface for Replacement policies for the Cache

- LRUPolicy.hh: contains LRU replacement policy

- PseudoLRUPolicy.hh: contains Pseudo-LRU replacement policy

- src/mem/ruby/slicc_interface

- AbstractCacheEntry.hh: contains the interface of Cache Entry

DMASequencer

This module implements handling for DMA transfers. It is derived from the RubyPort class. There can be a number of DMA controllers that interface with the DMASequencer. The DMA sequencer has a protocol-independent interface and implementation. The DMA controllers are described with SLICC and are protocol-specific.

TODO: Fix documentation to reflect latest changes in the implementation.

Note:

- At any time there can be only 1 request active in the DMASequencer.

- Only ordinary load and store requests are handled. No other request types such as Ifetch, RMW, LL/SC are handled

Related Files

- src/mem/ruby/system

- DMASequencer.hh: Declares the DMASequencer class and structure of a DMARequest

- DMASequencer.cc: Implements the methods of the DMASequencer class, such as request issue and callbacks.

Configuration Parameters

Currently there are no special configuration parameters for the DMASequencer.

Basic Operation

A request for data transfer is split up into multiple requests, each transferring a cache-block-size chunk of data. A request remains active until all of the smaller transfers have completed. During this time, the DMASequencer is in a busy state and cannot accept any new transfer requests.

DMA requests are made through the makeRequest method. If the sequencer is not busy and the request is of the correct type (LD/ST), it is accepted. A sequence of requests for smaller data chunks is then issued. The issueNext method issues each of the smaller requests. A data/acknowledgment callback signals completion of the last transfer and triggers the next call to issueNext until the original data transfer is complete. There is no separate event scheduler within the DMASequencer.
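The chunking and busy-state behaviour can be sketched in Python as below. This is a simplified model under assumptions: the names `split_dma_request` and `ToyDMASequencer` are hypothetical, a 64-byte block is assumed, and an unaligned first chunk is assumed to stop at the next block boundary.

```python
def split_dma_request(start_addr, length, block_size=64):
    """Yield (address, size) chunks that never cross a block boundary."""
    addr, remaining = start_addr, length
    while remaining > 0:
        room_in_block = block_size - (addr % block_size)
        chunk = min(room_in_block, remaining)
        yield addr, chunk
        addr += chunk
        remaining -= chunk


class ToyDMASequencer:
    def __init__(self, block_size=64):
        self.block_size = block_size
        self.busy = False
        self.pending = []   # chunks not yet issued
        self.issued = []    # chunks handed to the DMA controller so far

    def make_request(self, start_addr, length):
        """Accept a transfer only when no other transfer is active."""
        if self.busy:
            return False
        self.pending = list(
            split_dma_request(start_addr, length, self.block_size))
        self.busy = True
        self.issue_next()
        return True

    def issue_next(self):
        if self.pending:
            self.issued.append(self.pending.pop(0))
        else:
            self.busy = False   # whole transfer is complete

    def callback(self):
        """Data or acknowledgment arrived for the last issued chunk."""
        self.issue_next()
```

For instance, a 200-byte transfer starting at 0x1030 becomes chunks of 16, 64, 64 and 56 bytes, and the sequencer stays busy until the fourth callback has arrived.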

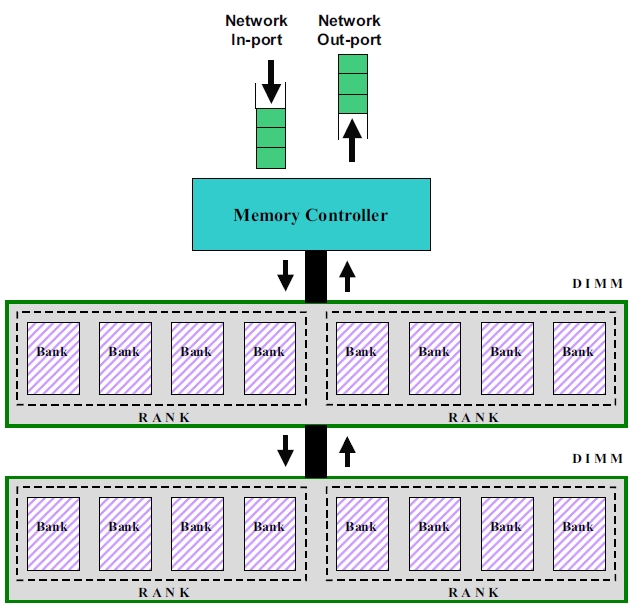

Memory Controller

This module simulates a basic DDR-style memory controller. It models a single channel, connected to any number of DIMMs with any number of ranks of DRAMs each. The following picture shows an overview of the memory organization and connections to the memory controller. General information about memory controllers can be found here.

Note:

- The product of the memory bus cycle multiplier, memory controller latency, and clock cycle time (= 1/processor frequency) gives a first-order approximation of the latency of memory requests in time. The Memory Controller module refines this further by considering bank and bus contention, queueing effects of finite queues, and refreshes.

- Data sheet values for some components of the memory latency are specified in time (nanoseconds), whereas the Memory Controller module expects all delay configuration parameters in cycles. The parameters should be set appropriately taking into account the processor and bus frequencies.

- The current implementation does not consider pin-bandwidth contention. Infinite bandwidth is assumed.

- Only closed bank policy is currently implemented; that is, each bank is automatically closed after a single read or write.

- The current implementation handles only a single channel. If you want multiple address/data channels, you need to instantiate multiple copies of this module.

- This is the only controller that is NOT specified in SLICC, but in C++.

Documentation source: Most (but not all) of the writeup in this section is taken verbatim from documentation in the gem5 source files, rubyconfig.defaults file of GEMS, and a ppt created by Andy Phelps on Jan 18, 2008.

Related Files

- src/mem/ruby/system

- MemoryControl.hh: This file declares the Memory Controller class.

- MemoryControl.cc: This file implements all the operations of the memory controller. This includes processing of input packets, address decoding and bank selection, request scheduling and contention handling, handling refresh, and returning completed requests to the directory controller.

- MemoryControl.py: Configuration parameters

Configuration Parameters

- dimms_per_channel: Currently the only thing that matters is the number of ranks per channel, i.e. the product of this parameter and ranks_per_dimm. But if and when this is expanded to do FB-DIMMs, the distinction between the two will matter.

- Address Mapping: This is controlled by configuration parameters banks_per_rank, bank_bit_0, ranks_per_dimm, rank_bit_0, dimms_per_channel, dimm_bit_0. You could choose to have the bank bits, rank bits, and DIMM bits in any order. For the default values, we assume this format for addresses:

- Offset within line: [5:0]

- Memory controller #: [7:6]

- Bank: [10:8]

- Rank: [11]

- DIMM: [12]

- Row addr / Col addr: [top:13]

If you get these bits wrong, then some banks won't see any requests; you need to check for this in the .stats output.

- mem_bus_cycle_multiplier: Basic cycle time of the memory controller. This defines the period which is used as the memory channel clock period, the address bus bit time, and the memory controller cycle time. Assuming a 200 MHz memory channel (DDR-400, which transfers 400 Mbit/s per data pin), and a 2 GHz processor clock, mem_bus_cycle_multiplier=10.

- mem_ctl_latency: Latency to return a read request or writeback acknowledgement. Measured in memory address cycles. This equals tRCD + CL + AL + (four bit times) + (round trip on channel) + (memory control internal delays). It's going to be an approximation, so pick what you like. Note: The fact that latency is a constant, and does not depend on two low-order address bits, implies that our memory controller either: (a) tells the DRAM to read the critical word first, and sends the critical word first back to the CPU, or (b) waits until it has seen all four bit times on the data wires before sending anything back. Either is plausible. If (a), remove the "four bit times" term from the calculation above.

- rank_rank_delay: This is how many memory address cycles to delay between reads to different ranks of DRAMs to allow for clock skew.

- read_write_delay: This is how many memory address cycles to delay between a read and a write. This is based on two things: (1) the data bus is used one cycle earlier in the operation; (2) a round-trip wire delay from the controller to the DIMM that did the reading. Usually this is set to 2.

- basic_bus_busy_time: Basic address and data bus occupancy. If you are assuming a 16-byte-wide data bus (pairs of DIMMs side-by-side), then the data bus occupancy matches the address bus occupancy at 2 cycles. But if the channel is only 8 bytes wide, you need to increase this bus occupancy time to 4 cycles.

- mem_random_arbitrate: By default, the memory controller uses round-robin to arbitrate between ready bank queues for use of the address bus. If you wish to add randomness to the system, set this parameter to one instead, and it will restart the round-robin pointer at a random bank number each cycle. If you want additional nondeterminism, set the parameter to some integer n >= 2, and it will in addition add a n% chance each cycle that a ready bank will be delayed an additional cycle. Note that if you are in mem_fixed_delay mode (see below), mem_random_arbitrate=1 will have no effect, but mem_random_arbitrate=2 or more will.

- mem_fixed_delay: If this is nonzero, it will disable the memory controller and instead give every request a fixed latency. The nonzero value specified here is measured in memory cycles and is just added to mem_ctl_latency. It will also show up in the stats file as a contributor to memory delays stalled at head of bank queue.

- tFAW: This is an obscure DRAM parameter that says that no more than four activate requests can happen within a window of a certain size. For most configurations this does not come into play, or has very little effect, but it could be used to throttle the power consumption of the DRAM. In this implementation (unlike in a DRAM data sheet) tFAW is measured in memory bus cycles; i.e. if tFAW = 16 then no more than four activates may happen within any 16-cycle window. Refreshes are included in the activates.

- refresh_period: This is the number of memory cycles between refresh of row x in bank n and refresh of row x+1 in bank n. For DDR-400, this is typically 7.8 usec for commercial systems; after 8192 such refreshes, this will have refreshed the whole chip in 64 msec. If we have a 5 nsec memory clock, 7800 / 5 = 1560 cycles. The memory controller will divide this by the total number of banks, and kick off a refresh to somebody every time that amount is counted down to zero. (There will be some rounding error there, but it should have minimal effect.)

- Typical Settings for configuration parameters: The default values are for DDR-400 assuming a 2GHz processor clock. If instead of DDR-400, you wanted DDR-800, the channel gets faster but the basic operation of the DRAM core is unchanged. Busy times appear to double just because they are measured in smaller clock cycles. The performance advantage comes because the bus busy times don't actually quite double. You would use something like these values:

- mem_bus_cycle_multiplier: 5

- bank_busy_time: 22

- rank_rank_delay: 2

- read_write_delay: 3

- basic_bus_busy_time: 3

- mem_ctl_latency: 20

- refresh_period: 3120
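The address mapping and the cycle arithmetic behind these parameters can be sketched in Python. The helper names are hypothetical, and the default argument values are simply the ones implied by the default address layout listed above (3 bank bits starting at bit 8, 1 rank bit at bit 11, 1 DIMM bit at bit 12); treat them as assumptions rather than as the values in MemoryControl.py.

```python
def bank_index(addr, banks_per_rank=8, bank_bit_0=8,
               ranks_per_dimm=2, rank_bit_0=11,
               dimms_per_channel=2, dimm_bit_0=12):
    """Map a physical address to a flat bank number within one channel."""
    bank = (addr >> bank_bit_0) % banks_per_rank
    rank = (addr >> rank_bit_0) % ranks_per_dimm
    dimm = (addr >> dimm_bit_0) % dimms_per_channel
    return (dimm * ranks_per_dimm + rank) * banks_per_rank + bank


def banks_seen(addresses, **mapping):
    """Set of distinct banks that receive at least one request."""
    return {bank_index(addr, **mapping) for addr in addresses}


def bus_cycle_multiplier(cpu_clock_mhz, mem_clock_mhz):
    """Processor cycles per memory channel cycle."""
    return cpu_clock_mhz // mem_clock_mhz


def to_mem_cycles(interval_ns, mem_clock_period_ns):
    """Convert a data sheet interval in nanoseconds to memory cycles."""
    return round(interval_ns / mem_clock_period_ns)


# Line addresses that reach memory controller 0 (address bits [7:6] == 0).
controller0 = [a for a in range(0, 1 << 16, 64) if (a >> 6) & 0b11 == 0]

print(len(banks_seen(controller0)))                # 32: every bank is used
print(len(banks_seen(controller0, bank_bit_0=6)))  # 8: most banks sit idle
print(bus_cycle_multiplier(2000, 200), to_mem_cycles(7800, 5))    # 10 1560
print(bus_cycle_multiplier(2000, 400), to_mem_cycles(7800, 2.5))  # 5 3120
```

The second line shows the failure mode mentioned above: if the bank bits overlap the bits that select the memory controller, some banks never see a request. The last two lines reproduce the DDR-400 and DDR-800 values of mem_bus_cycle_multiplier and refresh_period for a 2 GHz processor clock.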

Basic Operation

- Data Structures

Requests are enqueued into a single input queue. Responses are dequeued from a single response queue. There is a single bank queue for each DRAM bank (the total number of banks is the number of DIMMs per channel x number of ranks per DIMM x number of banks per rank). Each bank also has a busy counter. tFAW shift registers are maintained per rank.

- Timing

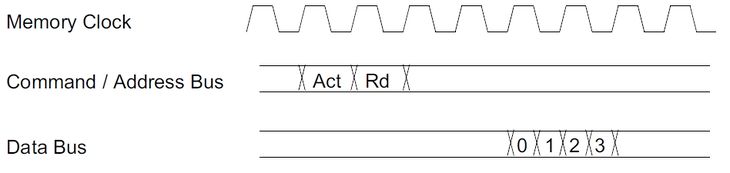

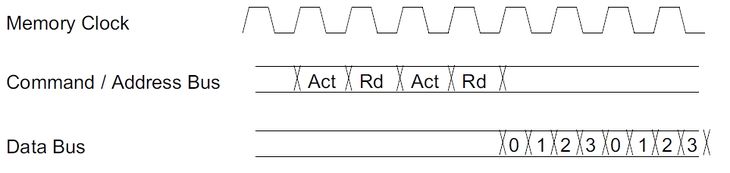

The “Act” (Activate) and “Rd” (Read) commands (or activate and write) always come as a pair, because we are modeling posted-CAS mode. (In non-posted-CAS, the read or write command would be scheduled separately later.) We do not explicitly model the separate commands; we simply say that the address bus occupancy is 2 cycles.

Since the data bus is also occupied for 2 cycles at a fixed offset in time as shown above, we do not need to explicitly model it; memory channel occupancy is still 2 cycles.

For back-to-back requests the data for the 2nd request could be delayed due to the following reasons:

- Read happens from a different rank

- Read is followed by a write

- 2nd request has a busy bank

- Basic request time > 2 (e.g. needs 8 data phits)
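The delay sources listed above can be illustrated with a deliberately simplified model. The sketch below is not the memory controller's actual bookkeeping: the `Request` tuple, the `min_issue_gap` helper, and the way the delays are combined are assumptions made for illustration. The parameter values are the DDR-800 settings listed earlier.

```python
from collections import namedtuple

# Hypothetical request descriptor used only in this sketch.
Request = namedtuple("Request", ["is_read", "rank", "bank"])

# DDR-800 values from the "Typical Settings" list.
PARAMS = {
    "basic_bus_busy_time": 3,
    "rank_rank_delay": 2,
    "read_write_delay": 3,
    "bank_busy_time": 22,
}


def min_issue_gap(prev, cur, params=PARAMS):
    """Assumed minimum number of memory cycles between issuing prev and cur."""
    gap = params["basic_bus_busy_time"]
    # Consecutive reads from different ranks need a bubble for driver skew.
    if prev.is_read and cur.is_read and prev.rank != cur.rank:
        gap += params["rank_rank_delay"]
    # A write uses the data wires earlier than a read, so a read followed
    # by a write needs a bubble as well.
    if prev.is_read and not cur.is_read:
        gap += params["read_write_delay"]
    # A request to the bank that was just used waits for it to become free.
    if (prev.rank, prev.bank) == (cur.rank, cur.bank):
        gap = max(gap, params["bank_busy_time"])
    return gap


read_r0_b0 = Request(is_read=True, rank=0, bank=0)
read_r0_b1 = Request(is_read=True, rank=0, bank=1)
read_r1_b0 = Request(is_read=True, rank=1, bank=0)
write_r0_b1 = Request(is_read=False, rank=0, bank=1)

print(min_issue_gap(read_r0_b0, read_r0_b1))   # same rank, different bank
print(min_issue_gap(read_r0_b0, read_r1_b0))   # different rank
print(min_issue_gap(read_r0_b0, write_r0_b1))  # read followed by write
print(min_issue_gap(read_r0_b0, read_r0_b0))   # same bank still busy
```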

- Scheduling and Bank Contention

The wakeup function, and in turn the executeCycle function, is triggered once every memory clock cycle.

Each memory request is placed in a queue associated with a specific memory bank. This queue is of finite size; if the queue is full the request will back up in an (infinite) common queue and will effectively throttle the whole system. This sort of behavior is intended to be closer to real system behavior than if we had an infinite queue on each bank. If you want the latter, just make the bank queues unreasonably large.

The head item on a bank queue is issued when all of the following are true:

- The bank is available

- The address path to the DIMM is available

- The data path to or from the DIMM is available

Note that we are not concerned about fixed offsets in time. The bank will not be used at the same moment as the address path, but since there is no queue in the DIMM or the DRAM it will be used at a constant number of cycles later, so it is treated as if it is used at the same time.

We are assuming "posted CAS"; that is, we send the READ or WRITE immediately after the ACTIVATE. This makes scheduling the address bus trivial; we always schedule a fixed set of cycles. For DDR-400, this is a set of two cycles; for some configurations such as DDR-800 the parameter tRRD forces this to be set to three cycles.

We assume a four-bit-time transfer on the data wires. This is the minimum burst length for DDR-2. This would correspond to (for example) a memory where each DIMM is 72 bits wide and DIMMs are ganged in pairs to deliver 64 bytes at a shot. This gives us the same occupancy on the data wires as on the address wires (for the two-address-cycle case).

The only non-trivial scheduling problem is the data wires. A write will use the wires earlier in the operation than a read will; typically one cycle earlier as seen at the DRAM, but earlier by a worst-case round-trip wire delay when seen at the memory controller. So, while reads from one rank can be scheduled back-to-back every two cycles, and writes (to any rank) scheduled every two cycles, when a read is followed by a write we need to insert a bubble. Furthermore, consecutive reads from two different ranks may need to insert a bubble due to skew between when one DRAM stops driving the wires and when the other one starts. (These bubbles are parameters.)

This means that when some number of reads and writes are at the heads of their queues, reads could starve writes, and/or reads to the same rank could starve out other requests, since the others would never see the data bus ready. For this reason, we have implemented an anti-starvation feature. A group of requests is marked "old", and a counter is incremented each cycle as long as any request from that batch has not issued. If the counter reaches twice the bank busy time, we hold off any newer requests until all of the "old" requests have issued.
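The anti-starvation rule can be sketched as a small state machine. This is a minimal illustration of the rule as described in the paragraph above, not the controller's code; the class and method names are hypothetical.

```python
class AntiStarvationGuard:
    """Toy model of the "old batch" rule described in the text."""

    def __init__(self, bank_busy_time):
        # Newer requests are held once the counter reaches this limit.
        self.limit = 2 * bank_busy_time
        self.old_pending = 0  # "old" requests that have not issued yet
        self.counter = 0

    def mark_old_batch(self, num_requests):
        """Mark a group of waiting requests as "old" and restart the count."""
        self.old_pending = num_requests
        self.counter = 0

    def tick(self):
        """Called once per memory cycle."""
        if self.old_pending > 0:
            self.counter += 1

    def may_issue(self, is_old):
        """Old requests may always issue; newer ones may be held off."""
        if is_old:
            return True
        return not (self.old_pending > 0 and self.counter >= self.limit)

    def note_issued(self, is_old):
        """Record that a request has issued."""
        if is_old and self.old_pending > 0:
            self.old_pending -= 1
            if self.old_pending == 0:
                self.counter = 0


guard = AntiStarvationGuard(bank_busy_time=22)
guard.mark_old_batch(2)
for _ in range(44):
    guard.tick()
held = not guard.may_issue(is_old=False)  # newer requests are now held
guard.note_issued(is_old=True)
guard.note_issued(is_old=True)
released = guard.may_issue(is_old=False)  # batch drained, hold is lifted
```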

Interconnection Network

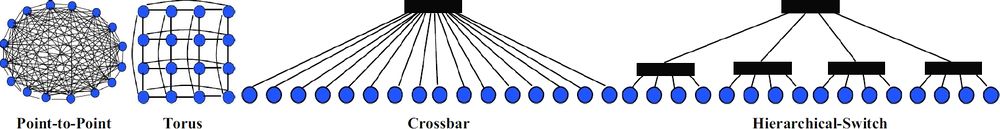

The various controllers of the memory hierarchy (L1/L2 caches, Directory, DMA etc), specified by the coherence protocol, are connected together via an interconnection network. The primary components of this network are

- Topology

- Routing

- Flow-Control

- Router Microarchitecture

Topology

The connections between the various controllers are specified via python files.

- Related Files:

- src/mem/ruby/network/topologies/Pt2Pt.py

- src/mem/ruby/network/topologies/Crossbar.py

- src/mem/ruby/network/topologies/Mesh.py

- src/mem/ruby/network/topologies/MeshDirCorners.py

- src/mem/ruby/network/Network.py

- Topology Descriptions:

- Pt2Pt: Each controller (L1/L2/Directory) is connected to every other controller via a direct link. This can be invoked from command line by --topology=Pt2Pt.

- Crossbar: Each controller (L1/L2/Directory) is connected to every other controller via one switch (modeling the crossbar). This can be invoked from command line by --topology=Crossbar.

- Mesh: This topology requires the number of directories to be equal to the number of cpus. The number of routers/switches is equal to the number of cpus in the system. Each router/switch is connected to one L1, one L2 (if present), and one Directory. It can be invoked from command line by --topology=Mesh. The number of rows in the mesh has to be specified by --mesh-rows. This parameter enables the creation of non-symmetrical meshes too.

- MeshDirCorners: This topology requires the number of directories to be equal to 4. The number of routers/switches is equal to the number of cpus in the system. Each router/switch is connected to one L1 and one L2 (if present). Each corner router/switch is also connected to one Directory. It can be invoked from command line by --topology=MeshDirCorners. The number of rows in the mesh has to be specified by --mesh-rows.

- Optional parameters specified by the topology files (defaults in Network.py):

- latency: latency of traversal within the link.

- weight: weight associated with this link. This parameter is used by the routing table while deciding routes, as explained next in Routing.

- bw_multiplier: used by the simple network to model different link bandwidths. This parameter is specified in thousandths of a byte, and the individual link bandwidth = bw_multiplier x endpoint_bandwidth (specified in Network.py).

Routing

Based on the topology, shortest path graph traversals are used to populate routing tables at each router/switch. The default routing algorithm tries to choose the route with minimum number of link traversals. Links can be given weights in the topology files to model different routing algorithms. For example, in Mesh.py and MeshDirCorners.py Y-direction links are given weights of 2, while X-direction links are given weights of 1, resulting in XY traversals in a mesh. adaptive_routing (in src/mem/ruby/network/SimpleNetwork.py) can be enabled to make the simple network choose routes based on occupancy of queues at each output port.
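The effect of link weights can be illustrated with a small sketch. It builds a mesh with X-links of weight 1 and Y-links of weight 2, computes weighted shortest distances, and at each hop picks the lowest-weight link among those that lie on a shortest path. That tie-break is an assumption made here to show how XY ordering can emerge; the text above does not spell out how Ruby resolves ties, and all function names are hypothetical.

```python
import heapq


def mesh_links(rows, cols, x_weight=1, y_weight=2):
    """Return {node: [(neighbor, weight), ...]} for a rows x cols mesh."""
    links = {}
    for r in range(rows):
        for c in range(cols):
            node = r * cols + c
            nbrs = []
            if c + 1 < cols:
                nbrs.append((node + 1, x_weight))
            if c > 0:
                nbrs.append((node - 1, x_weight))
            if r + 1 < rows:
                nbrs.append((node + cols, y_weight))
            if r > 0:
                nbrs.append((node - cols, y_weight))
            links[node] = nbrs
    return links


def distances_to(dest, links):
    """Dijkstra from dest (links are symmetric, so this gives distance to dest)."""
    dist = {dest: 0}
    heap = [(0, dest)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue
        for v, w in links[u]:
            nd = d + w
            if nd < dist.get(v, float("inf")):
                dist[v] = nd
                heapq.heappush(heap, (nd, v))
    return dist


def route(src, dest, links):
    """Follow shortest-path links, preferring the lowest-weight one (assumed)."""
    dist = distances_to(dest, links)
    path = [src]
    node = src
    while node != dest:
        candidates = [(w, v) for v, w in links[node] if w + dist[v] == dist[node]]
        _, node = min(candidates)
        path.append(node)
    return path


links = mesh_links(rows=3, cols=3)
# Node 0 is (row 0, col 0); node 5 is (row 1, col 2).
print(route(0, 5, links))
```

In this 3x3 example the route from node 0 to node 5 first moves along the row (X direction) and only then changes rows (Y direction).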

Flow-Control and Router Microarchitecture

Ruby supports two network models, Simple and Garnet, which trade off simulation speed against detailed modeling: the simple network favors simulation speed, while Garnet favors detailed modeling.

- Related Files:

- src/mem/ruby/network/Network.py

- src/mem/ruby/network/simple

- src/mem/ruby/network/garnet/BaseGarnetNetwork.py

- src/mem/ruby/network/garnet/fixed-pipeline

- src/mem/ruby/network/garnet/flexible-pipeline

Configuration and Setup

The default network model in Ruby is the simple network. Garnet fixed-pipeline or flexible-pipeline networks can be enabled by adding --garnet-network=fixed, or --garnet-network=flexible on the command line, respectively.

- Configuration:

Some of the network parameters specified in Network.py are:

- number_of_virtual_networks: This is the maximum number of virtual networks. The actual number of active virtual networks is determined by the protocol.

- control_msg_size: The size of control messages in bytes. Default is 8. m_data_msg_size in Network.cc is set to the block size in bytes + control_msg_size.

- link_latency: Latency of each link in cycles. This can be specified for each link from the topology files. Default is 1.

Simple Network

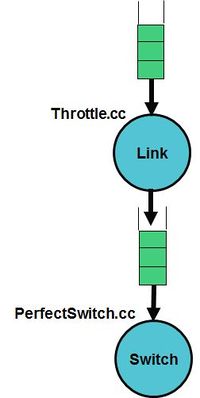

The simple network models hop-by-hop network traversal, but abstracts out detailed modeling within the switches. The switches are modeled in simple/PerfectSwitch.cc while the links are modeled in simple/Throttle.cc. The flow-control is implemented by monitoring the available buffers and available bandwidth in output links before sending.

- Configuration:

Simple network uses the generic network parameters in Network.py. Additional parameters are specified in SimpleNetwork.py:

- buffer_size: Size of buffers at each switch input and output ports. A value of 0 implies infinite buffering.

- endpoint_bandwidth: Bandwidth at the end points of the network, in thousandths of a byte.

- bw_multiplier: Bandwidth specified in thousandths of a byte. The individual link bandwidth becomes bw_multiplier x endpoint_bandwidth.

- adaptive_routing: This enables adaptive routing based on occupancy of output buffers.

Garnet Networks

Garnet is a detailed interconnection network model inside GEM5. It consists of a detailed fixed-pipeline model, and an approximate flexible-pipeline model.

The fixed-pipeline model is intended for low-level interconnection network evaluations and models the detailed micro-architectural features of a 5-stage Virtual Channel router with credit-based flow-control. Researchers interested in investigating different network microarchitectures can readily modify the modeled microarchitecture and pipeline. Also, for system level evaluations that are not concerned with the detailed network characteristics, this model provides an accurate network model and should be used as the default model.

The flexible-pipeline model is intended to provide a reasonable abstraction of all interconnection network models, while allowing the router pipeline depth to be flexibly adjusted. A router pipeline might range from a single cycle to several cycles. For evaluations that wish to easily change the router pipeline depth, the flexible-pipeline model provides a neat abstraction that can be used.

If your use of Garnet contributes to a published paper, please cite the Garnet research paper.

- Configuration

Garnet uses the generic network parameters in Network.py. Additional parameters are specified in BaseGarnetNetwork.py:

- flit_size: flit size in bytes. Flits are the granularity at which information is sent from one router to the other. Default is 16 (=> 128 bits). [This default value of 16 results in control messages fitting within 1 flit, and data messages fitting within 5 flits].

- vcs_per_class: number of virtual channels (VC) per message class (Note: message class = virtual network). Default is 4.

The following are only valid for fixed-pipeline:

- buffers_per_data_vc: number of flit-buffers per VC in the data message class. Since data messages occupy 5 flits, this value can lie between 1-5. Default is 4.

- buffers_per_ctrl_vc: number of flit-buffers per VC in the control message class. Since control messages occupy 1 flit, and a VC can only hold one message at a time, this value has to be 1. Default is 1.

Note: garnet assumes that ctrl messages are 1-flit wide. If ctrl messages occupy more than one flit (due to a smaller flit_size, or a larger control_msg_size), all VCs are given buffers_per_data_vc number of flit-buffers.
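The flit counts quoted above can be checked with a short calculation. The sketch assumes a 64-byte cache block, which is not stated in this section but is consistent with data messages occupying 5 flits; the helper name is hypothetical.

```python
import math


def flits_per_message(msg_size_bytes, flit_size=16):
    """Number of flits needed to carry a message of the given size."""
    return math.ceil(msg_size_bytes / flit_size)


control_msg_size = 8   # default from Network.py, per the text
block_size = 64        # assumed cache block size in bytes
data_msg_size = block_size + control_msg_size

ctrl_flits = flits_per_message(control_msg_size)
data_flits = flits_per_message(data_msg_size)

# With a smaller flit size, control messages no longer fit in one flit,
# which is the case the note above describes.
ctrl_flits_small = flits_per_message(control_msg_size, flit_size=4)

print(ctrl_flits, data_flits, ctrl_flits_small)
```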

The following are only valid for flexible-pipeline:

- number_of_pipe_stages: number of pipeline stages in each router in the flexible-pipeline model. Default is 4.

- Additional features

- Routing: Currently, garnet only models deterministic routing using the routing tables described earlier.

- Modeling variable link bandwidth: The flit size specifies the link bandwidth as the number of bytes per cycle per network link. Links which have lower bandwidth than this (for instance some off-chip links) can be modeled by specifying a longer latency across them in the topology file (as explained earlier).

- Multicast messages: The network modeled does not have hardware multi-cast support within the network. A multi-cast message gets broken into multiple uni-cast messages at the interface to the network.

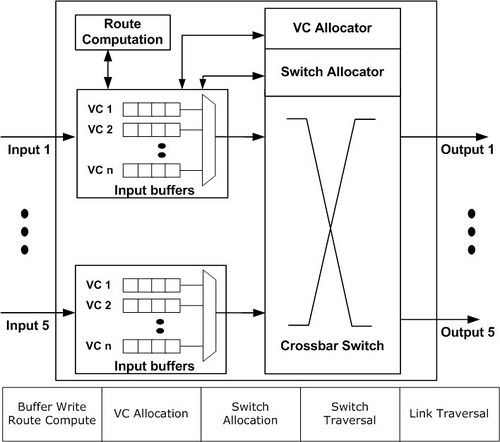

- Garnet fixed-pipeline network

The garnet fixed-pipeline model implements a classic 5-stage Virtual Channel router. The five stages are:

- Buffer Write (BW) + Route Compute (RC): The incoming flit gets buffered and computes its output port.

- VC Allocation (VA): All buffered flits allocate for VCs at the next routers. [The allocation occurs in a separable manner: First, each input VC chooses one output VC, using input arbiters, and places a request for it. Then, each output VC breaks conflicts via output arbiters]. All arbiters in ordered virtual networks are queueing to maintain point-to-point ordering. All other arbiters are round-robin.

- Switch Allocation (SA): All buffered flits try to reserve the switch ports for the next cycle. [The allocation occurs in a separable manner: First, each input chooses one input VC, using input arbiters, which places a switch request. Then, each output port breaks conflicts via output arbiters]. All arbiters in ordered virtual networks are queueing to maintain point-to-point ordering. All other arbiters are round-robin.

- Switch Traversal (ST): Flits that won SA traverse the crossbar switch.

- Link Traversal (LT): Flits from the crossbar traverse links to reach the next routers.

The flow-control implemented is credit-based.
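As a reminder of how credit-based flow control works in general, the sketch below tracks credits for a single downstream virtual channel. It is a generic illustration, not Garnet's implementation; the class name is hypothetical, and the buffer count of 4 simply reuses the buffers_per_data_vc default.

```python
class CreditedVC:
    """Upstream router's view of one downstream virtual channel."""

    def __init__(self, num_buffers):
        # One credit per free flit buffer at the downstream router.
        self.credits = num_buffers

    def can_send(self):
        return self.credits > 0

    def send_flit(self):
        """Consume a credit when a flit is sent downstream."""
        if not self.can_send():
            raise RuntimeError("no credits: downstream buffers are full")
        self.credits -= 1

    def receive_credit(self):
        """Downstream freed a buffer and returned a credit."""
        self.credits += 1


vc = CreditedVC(num_buffers=4)
for _ in range(4):
    vc.send_flit()
blocked = not vc.can_send()   # all downstream buffers are occupied
vc.receive_credit()
unblocked = vc.can_send()     # one buffer was freed
```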

- Garnet flexible-pipeline network

The garnet flexible-pipeline model should be used when one desires a router pipeline different than 5 stages (the 5 stages include the link traversal stage). All the components of a router (buffers, VC and switch allocators, switch etc) are modeled similarly to the fixed-pipeline design, but the pipeline depth is not modeled explicitly and instead comes from the input parameter number_of_pipe_stages. The flow-control is implemented by monitoring the availability of buffers at each output port before sending.

Life of a memory request in Ruby

In this section we provide a high-level overview of how a memory request is serviced by Ruby as a whole and which components in Ruby it goes through. For the detailed operation of each component, refer to the previous sections describing each component in isolation.

- A memory request from a core or hardware context of M5 enters the jurisdiction of Ruby through the RubyPort::recvTiming interface (in src/mem/ruby/system/RubyPort.hh/cc). The number of RubyPort instantiations in the simulated system is equal to the number of hardware thread contexts or cores (in the case of non-multithreaded cores). A port on the side of each core is tied to a corresponding RubyPort.

- The memory request arrives as an M5 packet, and RubyPort is responsible for converting it to a RubyRequest object that is understood by the various components of Ruby. It also determines whether the request is a PIO access and, if so, steers the packet to the correct PIO. Once it has generated the corresponding RubyRequest object and ascertained that the request is a normal memory request (not a PIO access), it passes the request to the Sequencer::makeRequest interface of the Sequencer object attached to the port (the variable ruby_port holds the pointer to it). Observe that the Sequencer class is itself derived from the RubyPort class.

- As mentioned in the section describing the Sequencer class of Ruby, there are as many Sequencer objects in a simulated system as there are hardware thread contexts (which is also equal to the number of RubyPort objects in the system), and there is a one-to-one mapping between the Sequencer objects and the hardware thread contexts. Once a memory request arrives at Sequencer::makeRequest, it does various accounting and resource allocation for the request and finally pushes the request to Ruby's coherent cache hierarchy to be satisfied, while accounting for the delay in servicing it. The request is pushed to the cache hierarchy by enqueueing it in the mandatory queue after accounting for the L1 cache access latency. The mandatory queue (variable name m_mandatory_q_ptr) effectively acts as the interface between the Sequencer and the SLICC-generated cache coherence files.

- The L1 cache controller (generated by SLICC according to the coherence protocol specification) dequeues the request from the mandatory queue, looks up the cache, and makes the necessary coherence state transitions and/or pushes the request to the next level of the cache hierarchy as required. The different controllers and components of the SLICC-generated Ruby code communicate among themselves through instantiations of Ruby's MessageBuffer class (src/mem/ruby/buffers/MessageBuffer.cc/hh), which can act as ordered or unordered buffers or queues. The delays in servicing the different steps of a memory request are accounted for by scheduling the enqueue and dequeue operations accordingly. If the requested cache block is found in the L1 cache with the required coherence permissions, the request is satisfied and returned immediately. Otherwise the request is pushed to the next level of the cache hierarchy through a MessageBuffer. A request can go all the way up to Ruby's Memory Controller (also called the Directory in many protocols). Once the request is satisfied, it is pushed upwards in the hierarchy through MessageBuffers.

- Once the requested cache block is available at the L1 cache with the desired coherence permissions, the L1 cache controller informs the corresponding Sequencer object by calling its readCallback or writeCallback method, depending on the type of the request. Note that by the time these methods on the Sequencer are called, the latency of servicing the request has been implicitly accounted for.

- The Sequencer then clears up the accounting information for the corresponding request and then calls the RubyPort::ruby_hit_callback method. This ultimately returns the result of the request to the corresponding port of the core/ hardware context of the frontend (M5).
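The sequence of steps above can be summarized with a toy model. This is not gem5 code: the classes are hypothetical stand-ins that only record the order of calls, with no timing modeled. RubyPort and Sequencer are collapsed into one class (the text notes that Sequencer derives from RubyPort), and a plain deque stands in for the mandatory queue. The method names recvTiming, makeRequest, readCallback, writeCallback and ruby_hit_callback are taken from the text.

```python
from collections import deque


class ToySequencer:
    """Stands in for RubyPort + Sequencer; records the call order only."""

    def __init__(self):
        self.trace = []
        self.mandatory_q = deque()  # stand-in for m_mandatory_q_ptr
        self.outstanding = set()

    def recvTiming(self, addr, is_write=False):
        self.trace.append("RubyPort::recvTiming")
        self.makeRequest(addr, is_write)

    def makeRequest(self, addr, is_write):
        self.trace.append("Sequencer::makeRequest")
        self.outstanding.add(addr)                   # accounting
        self.mandatory_q.append((addr, is_write))    # hand off to L1

    def readCallback(self, addr):
        self.trace.append("Sequencer::readCallback")
        self._finish(addr)

    def writeCallback(self, addr):
        self.trace.append("Sequencer::writeCallback")
        self._finish(addr)

    def _finish(self, addr):
        self.outstanding.discard(addr)               # clear accounting
        self.trace.append("RubyPort::ruby_hit_callback")


class ToyL1Controller:
    """Stands in for the SLICC-generated L1 cache controller."""

    def __init__(self, sequencer, cached_blocks):
        self.sequencer = sequencer
        self.cached = set(cached_blocks)

    def wakeup(self):
        queue = self.sequencer.mandatory_q
        while queue:
            addr, is_write = queue.popleft()
            if addr not in self.cached:
                # A real controller would send a message to the next level.
                self.sequencer.trace.append("L1 miss: forwarded to next level")
                self.cached.add(addr)
            if is_write:
                self.sequencer.writeCallback(addr)
            else:
                self.sequencer.readCallback(addr)


seq = ToySequencer()
l1 = ToyL1Controller(seq, cached_blocks={0x40})
seq.recvTiming(0x40)   # read that hits in L1
l1.wakeup()
print("\n".join(seq.trace))
```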